MonX v1.0

The perceptual interface layer of the AIXR system. A hardware-software stack enabling embodied intelligence to perceive and interpret the physical environment from a first-person perspective.

STATUS: ACTIVE PROTOTYPE

Multimodal Input

Disembodied AI limitations. Server-bound models lack physical context and situated awareness. MonX provides the necessary embodiment for AI to function within human-centric environments.

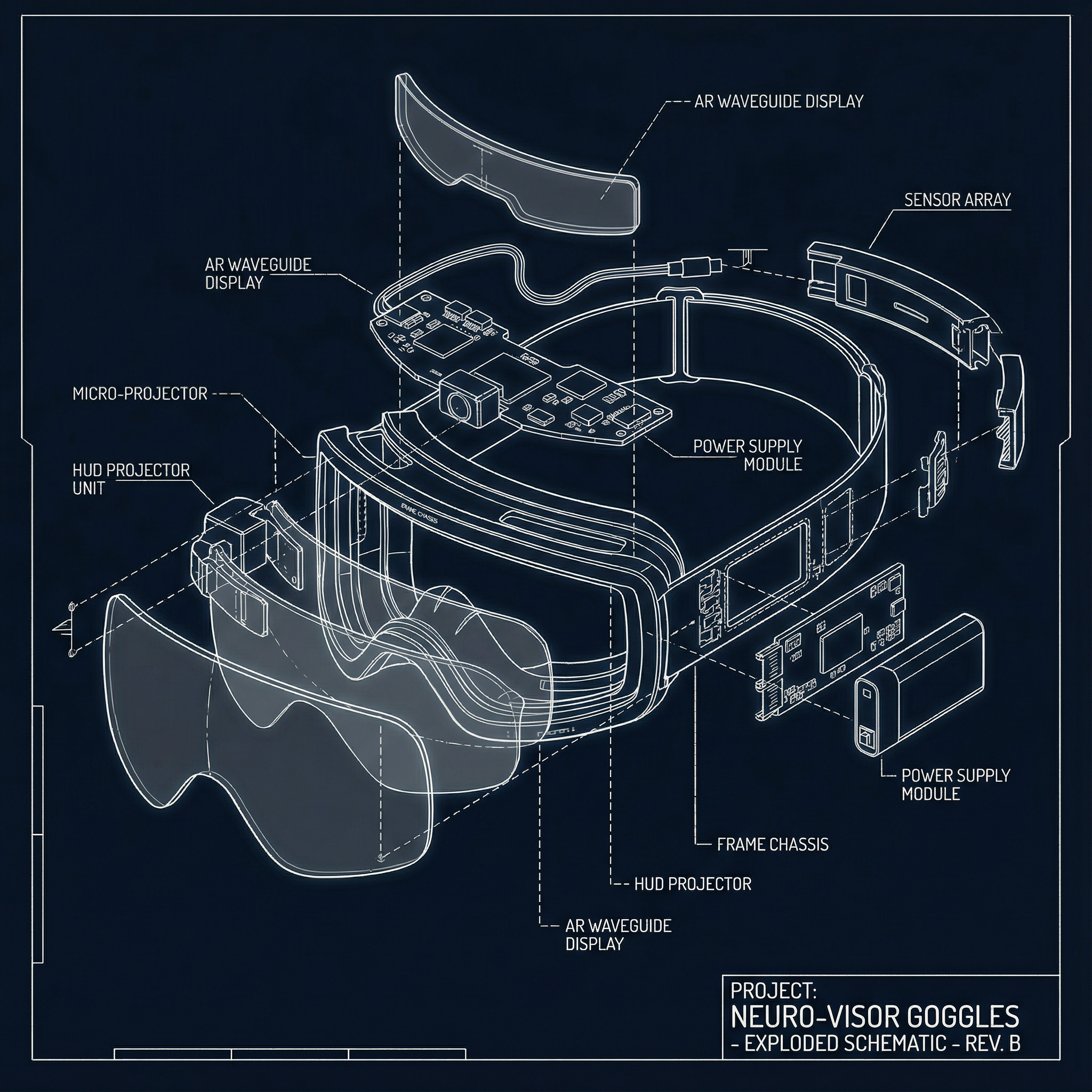

The MonX Terminal

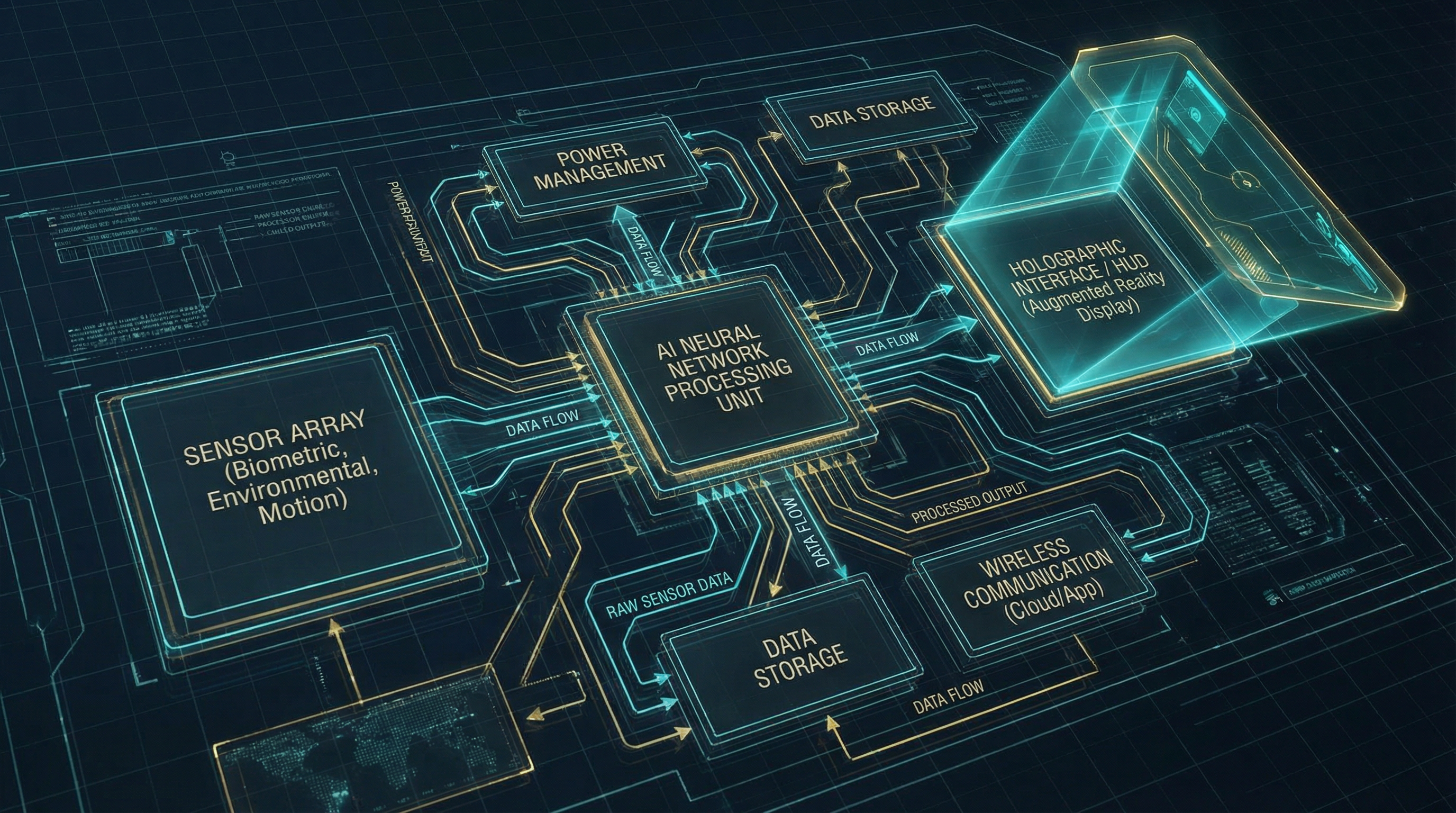

Terminal architecture. A local processing node capturing multimodal input (gaze, audio, biosignals) to facilitate context-aware spatial computing and environmental control.

The Interface

Spatial Intelligence Layer. An adaptive heads-up display (HUD) overlaying semantic data onto physical infrastructure, creating a seamless mediation layer between human perception and digital systems.

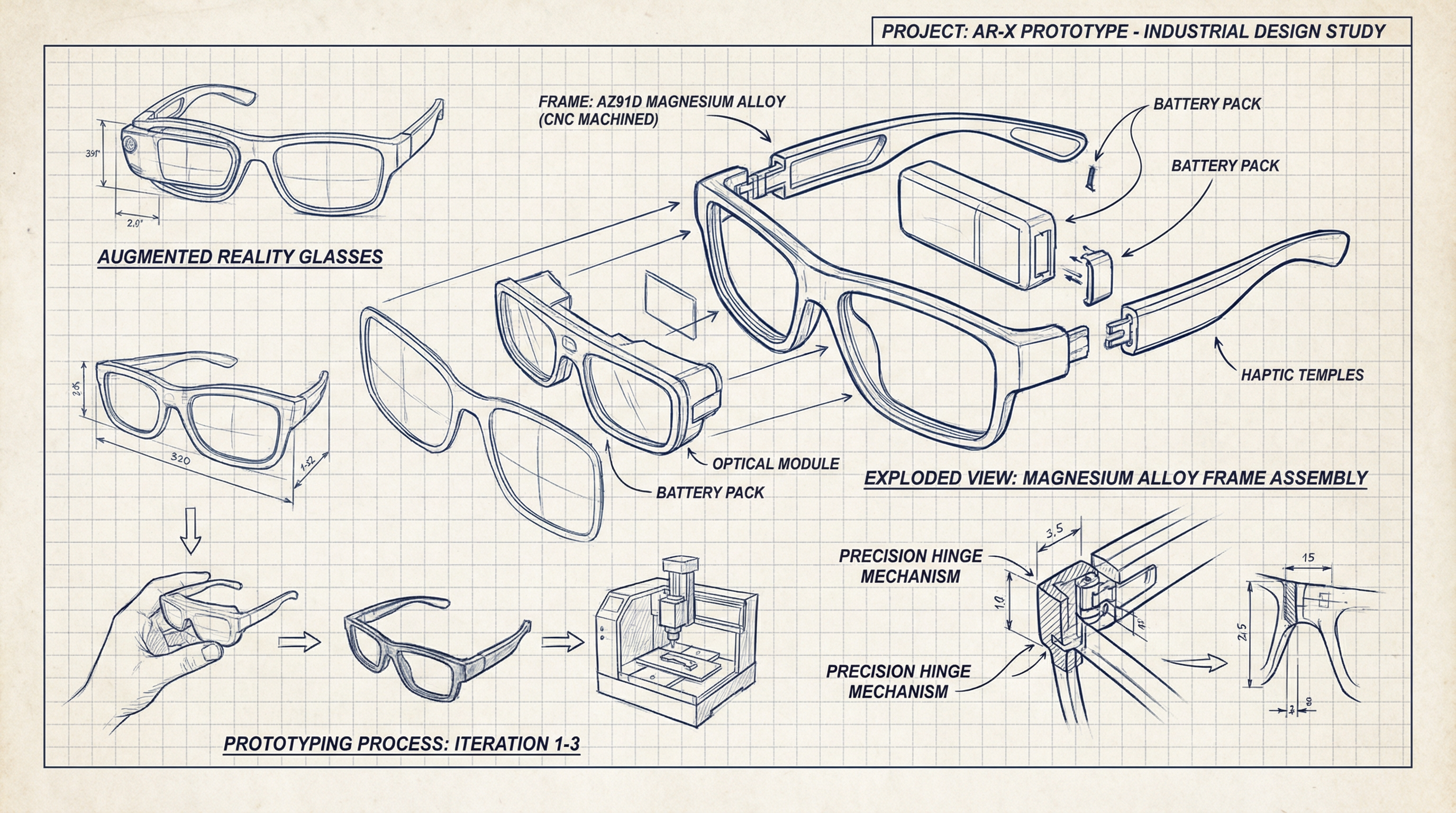

Architectural Thinking in Hardware

The design process of MonX follows an architectural approach: structure first, then skin. We iterated through 50+ form factors to balance weight distribution, thermal management, and aesthetic minimalism.

Exploded Axonometric

Deconstructing the device into modular components allows for rapid sensor upgrades without redesigning the entire chassis.

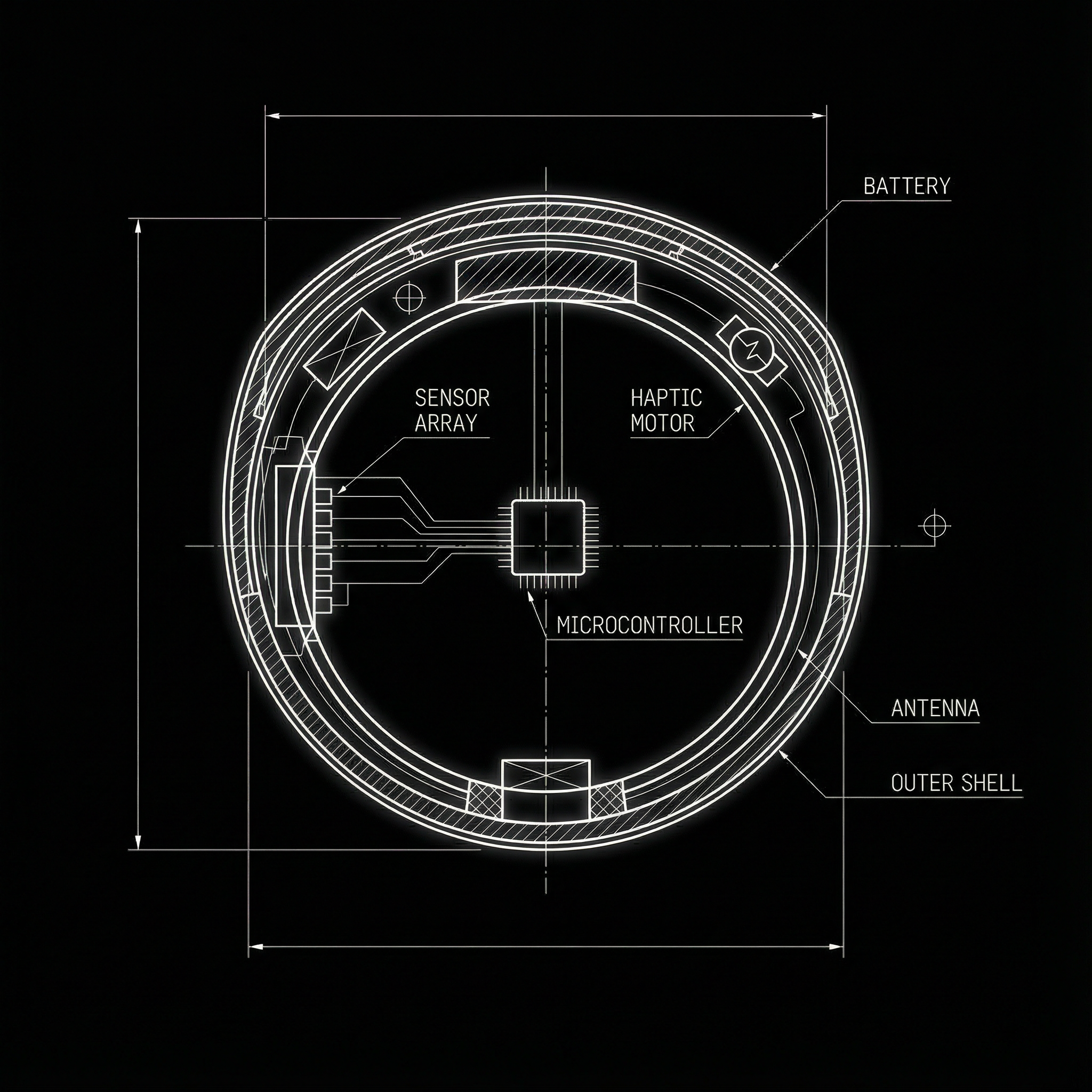

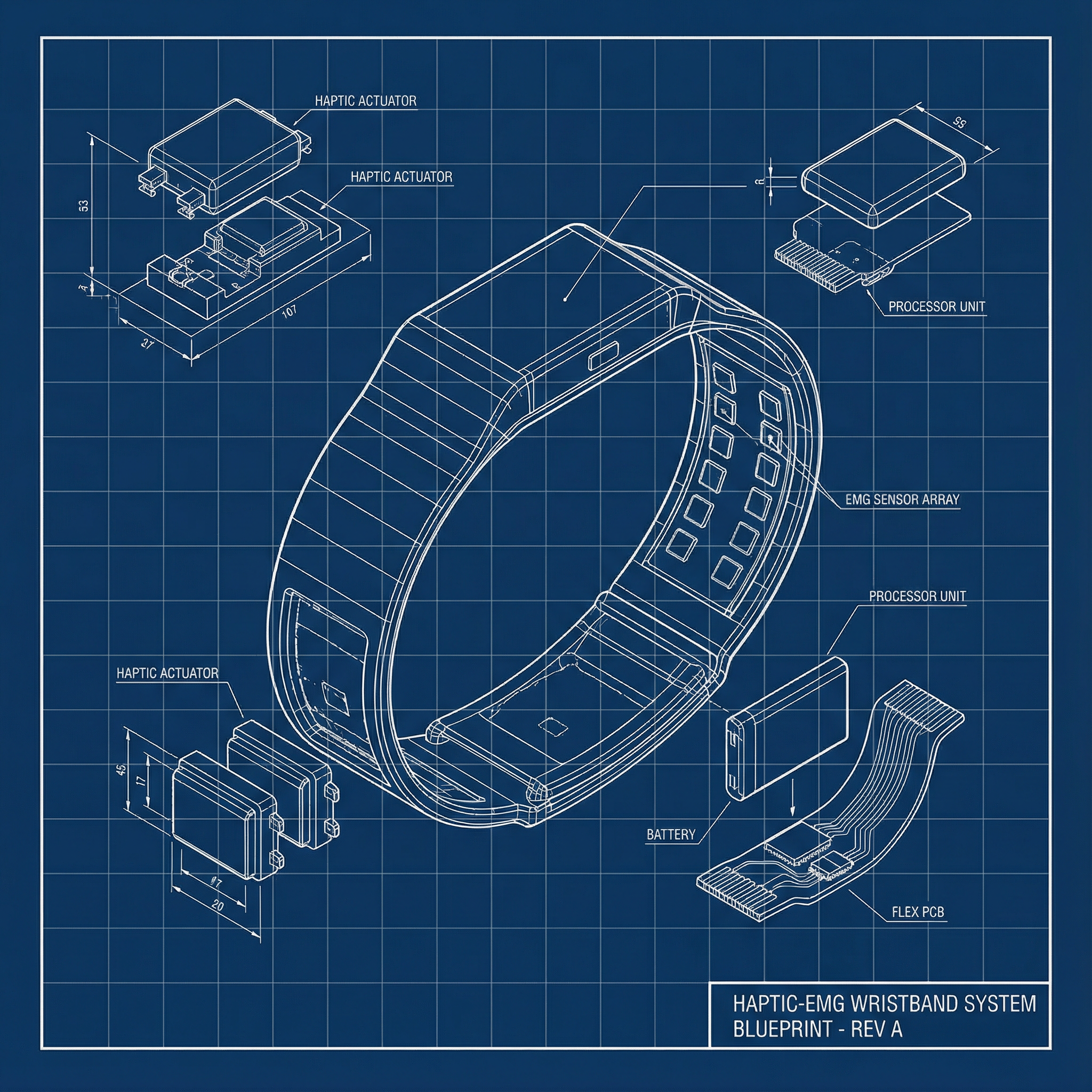

Symbiosis of Cognition & Computation

MonX devices act as the neural bridge, translating human intent into spatial commands. The result is a seamless, non-invasive interface where biological signals merge with the AIXR digital substrate.

Eye-tracking data correlates with attention maps to predict user intent before action.

Micro-vibrations confirm spatial interactions without visual clutter.

Biosignal sensing detects finger movements milliseconds before they physically occur.
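As a rough illustration of the gaze-based intent prediction described above, the sketch below ranks candidate targets by combining gaze dwell with an attention weight. It is not MonX's actual method. The `Target` type, the `rank_intent` function, the normalized screen coordinates, the `radius` parameter, and the multiplicative scoring rule are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    name: str
    x: float          # normalized view coordinates in [0, 1] (assumed)
    y: float
    attention: float  # hypothetical attention-map weight in [0, 1]


def rank_intent(gaze_samples, targets, radius=0.1):
    """Rank targets by (fraction of gaze samples near the target) * attention weight."""
    scores = {}
    for t in targets:
        # Count gaze samples that fall inside a circle of `radius` around the target.
        hits = sum(
            1 for gx, gy in gaze_samples
            if (gx - t.x) ** 2 + (gy - t.y) ** 2 <= radius ** 2
        )
        dwell = hits / len(gaze_samples) if gaze_samples else 0.0
        scores[t.name] = dwell * t.attention
    # Highest score first: the most likely intended target.
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


gaze = [(0.50, 0.50), (0.52, 0.49), (0.90, 0.10)]
candidates = [Target("lamp", 0.5, 0.5, 0.8), Target("door", 0.9, 0.1, 0.9)]
ranked = rank_intent(gaze, candidates)
print(ranked[0][0])  # lamp
```

A real system would likely use fixation detection and a learned model rather than a fixed radius; the point here is only that dwell and attention can be combined into a single ranking before the user acts.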

The Neural Loop

MonX operates on a closed-loop "Perception-Action" cycle. Unlike passive displays, it actively parses the environment using onboard SLAM (simultaneous localization and mapping) and vision-language models (VLMs) to understand context before displaying information.

1. Perception Layer

Multimodal sensor fusion (LiDAR, RGB, IMU) creates a real-time digital twin of the immediate environment.

2. Context Engine

An on-device NPU processes semantic data, identifying objects, text, and spatial relationships with under 50 ms of latency.

3. Adaptive Interface

Generative UI system that projects relevant information only when needed, minimizing cognitive load.
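The three stages above can be read as a single pass through a loop. The following is a minimal sketch of that flow, not the MonX implementation: every function name, the `SceneObject` type, the `relevance` score, and the `min_relevance` threshold are hypothetical, and the stage bodies are stand-ins for real sensor fusion and model inference. The only figure taken from the text is the 50 ms budget, applied here to the context stage.

```python
import time
from dataclasses import dataclass

LATENCY_BUDGET_S = 0.050  # the "under 50 ms" figure quoted for the context engine


@dataclass(frozen=True)
class SceneObject:
    label: str
    distance_m: float
    relevance: float  # hypothetical score in [0, 1]; not from the source


def perceive(sensor_frame):
    """Stage 1 stand-in: merge sensor readings into one world snapshot."""
    return {
        "timestamp": sensor_frame["timestamp"],
        "detections": list(sensor_frame["detections"]),
    }


def understand(snapshot):
    """Stage 2 stand-in: turn raw detections into labeled scene objects."""
    return [SceneObject(label, dist, rel) for label, dist, rel in snapshot["detections"]]


def select_overlays(objects, min_relevance=0.6):
    """Stage 3 stand-in: surface only the objects worth showing."""
    return [o.label for o in objects if o.relevance >= min_relevance]


def run_cycle(sensor_frame):
    """Run one perception -> context -> interface pass and time the context stage."""
    snapshot = perceive(sensor_frame)
    start = time.perf_counter()
    objects = understand(snapshot)
    elapsed = time.perf_counter() - start
    overlays = select_overlays(objects)
    return overlays, elapsed <= LATENCY_BUDGET_S


frame = {"timestamp": 0.0, "detections": [("door", 2.0, 0.9), ("poster", 4.5, 0.2)]}
overlays, within_budget = run_cycle(frame)
print(overlays)  # ['door']
```

Filtering by relevance in the last stage mirrors the stated goal of showing information only when needed; the low-relevance "poster" is detected but never displayed.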

Ready for Deployment?

MonX is currently in closed beta for research partners and enterprise pilot programs.